Production Architecture

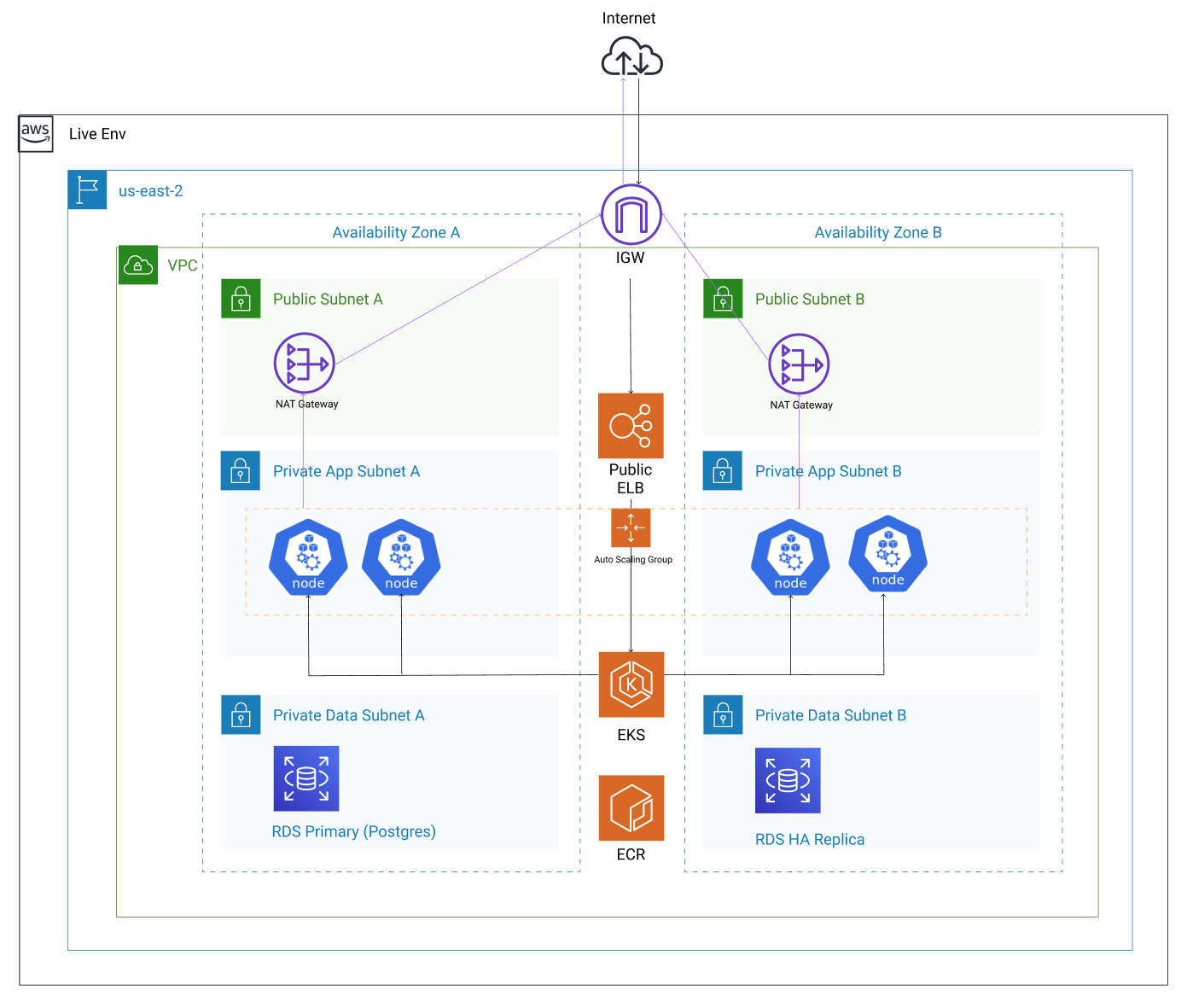

Our Brmbl.io core infrastructure is primarily hosted in the Amazon Web Services (AWS) us-east-2 and ap-southeast-2 regions (see Regions and Zones).

This document does not cover servers that are not integral to the public-facing operations of Brmbl.io.

Current Architecture

Brmbl.io Production Architecture

Source (Bramble internal use only)

Brmbl.io runs on Kubernetes, which encourages stateless, Twelve-Factor services running at scale in a cloud-native environment.

Cluster Configuration

Brmbl.io uses several Kubernetes clusters for live (production) environments, with similarly configured clusters for non-live environments such as staging.

Clusters run primarily on managed Amazon EKS, which provides high availability, scalability and security.

The following projects are used to manage the installation:

- brmbl-io-infra: Pulumi configuration for the cluster. All resources necessary to run the cluster are configured here, including the AWS infrastructure, the cluster itself, node pools, service accounts, IP address reservations, load balancers, Ingresses and Deployments.

Node pools are used to isolate workloads for different services running in the cluster and are defined in Pulumi.
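One common way to get this kind of isolation in Kubernetes is to label and taint each node pool, then give workloads a matching node selector and toleration. The Python sketch below models that matching rule in simplified form. The pool names, labels and taint strings are hypothetical, and it is an assumption that our pools use this mechanism; the real definitions live in Pulumi.

```python
from dataclasses import dataclass, field


@dataclass
class NodePool:
    name: str
    labels: dict[str, str] = field(default_factory=dict)
    # Simplified: each taint is an opaque string with an implied NoSchedule effect.
    taints: set[str] = field(default_factory=set)


@dataclass
class Workload:
    name: str
    node_selector: dict[str, str] = field(default_factory=dict)
    tolerations: set[str] = field(default_factory=set)


def eligible_pools(workload: Workload, pools: list[NodePool]) -> list[str]:
    """Return the names of pools this workload could be scheduled onto."""
    result = []
    for pool in pools:
        # Every selector key/value must be present in the pool's labels.
        selector_ok = all(
            pool.labels.get(key) == value
            for key, value in workload.node_selector.items()
        )
        # Every taint on the pool must be tolerated by the workload.
        taints_ok = pool.taints <= workload.tolerations
        if selector_ok and taints_ok:
            result.append(pool.name)
    return result


# Hypothetical pools, for illustration only.
POOLS = [
    NodePool("app", {"pool": "app"}, {"dedicated=app"}),
    NodePool("monitoring", {"pool": "monitoring"}, {"dedicated=monitoring"}),
    NodePool("default", {"pool": "default"}),
]

web = Workload("web", {"pool": "app"}, {"dedicated=app"})
batch = Workload("batch")
print(eligible_pools(web, POOLS))
print(eligible_pools(batch, POOLS))
```

In this model a workload with no selector and no tolerations can only land on the untainted pool, which is what keeps general workloads off the dedicated pools.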

Monitoring and Logging

Monitoring for Brmbl.io runs in the same cluster as the application. Prometheus and Grafana are configured using Helm charts in the cluster-svcs namespace. Every cluster has its own Prometheus instance, which gives us some sharding for metrics.

Alerting for the cluster uses generated rules that feed into our overall SLA for the platform.

Logging is configured using Amazon CloudWatch, to which the logs for Kubernetes and for every pod are forwarded.

Cluster Configuration Updates

A single namespace, brambl-app, is used exclusively for the Bramble application.

Configuration updates are made in the infra project, where Pulumi stacks set defaults for the Brmbl.io environment with per-environment overrides.
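To illustrate the defaults-plus-overrides idea, here is a minimal Python sketch of a deep merge. It is not taken from the infra project: the function name `merge_config`, the keys and the values are all hypothetical, and the way the real stacks combine their settings may differ.

```python
import copy


def merge_config(defaults: dict, overrides: dict) -> dict:
    """Return defaults with overrides applied, merging nested dicts key by key."""
    merged = copy.deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Both sides are mappings: merge them instead of replacing wholesale.
            merged[key] = merge_config(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Hypothetical values, for illustration only.
DEFAULTS = {"replicas": 3, "resources": {"cpu": "500m", "memory": "512Mi"}}
STAGING_OVERRIDES = {"replicas": 1, "resources": {"memory": "256Mi"}}

staging = merge_config(DEFAULTS, STAGING_OVERRIDES)
print(staging)
# {'replicas': 1, 'resources': {'cpu': '500m', 'memory': '256Mi'}}
```

Because the merge is recursive, an environment only needs to state the values that differ from the defaults; everything else is inherited.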

Changes to this configuration are applied by the SRE and Delivery team after review through an MR review workflow.

Namespaces for other cluster services, such as logging and monitoring, follow a similar GitOps workflow in the infra repository.

Bramble Application Updates

When an application update is ready, the CI pipeline triggers an infra pipeline that updates the application image in the cluster.
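Conceptually, the infra pipeline's job at this step is to swap one container image reference for another. The Python sketch below shows that change on a Deployment-shaped dictionary. The function `set_image`, the Deployment name, the container name and the registry path are hypothetical; the actual pipeline applies the change through the infra project rather than through code like this.

```python
import copy


def set_image(deployment: dict, container: str, image: str) -> dict:
    """Return a copy of the Deployment with the named container's image replaced."""
    updated = copy.deepcopy(deployment)
    for spec in updated["spec"]["template"]["spec"]["containers"]:
        if spec["name"] == container:
            spec["image"] = image
            return updated
    raise KeyError(f"container {container!r} not found in deployment")


# Hypothetical manifest, for illustration only.
DEPLOYMENT = {
    "kind": "Deployment",
    "metadata": {"name": "bramble", "namespace": "brambl-app"},
    "spec": {
        "template": {
            "spec": {
                "containers": [
                    {"name": "app", "image": "registry.example.com/bramble/app:1.4.1"}
                ]
            }
        }
    },
}

new_deployment = set_image(DEPLOYMENT, "app", "registry.example.com/bramble/app:1.4.2")
print(new_deployment["spec"]["template"]["spec"]["containers"][0]["image"])
# registry.example.com/bramble/app:1.4.2
```

Returning a modified copy rather than mutating the input keeps the previous manifest available for comparison or rollback.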

Images that we do not build ourselves may be pulled from the GitLab Container Registry or from our own managed Amazon ECR.

Database Architecture

We use Postgres on Amazon RDS in a highly available configuration, with standby replicas and regular snapshots.

DNS

We host our DNS with DNSimple; a migration to Amazon Route 53 is being considered.

TLD Zones

All services that provide Bramble as a service shall have DNS names in the brmbl.io domain.
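A rule like this is easy to check mechanically, for example in a review or CI step. The Python sketch below is one way to test whether a hostname falls inside the zone; the helper name `in_zone` and the sample hostnames are hypothetical.

```python
def in_zone(hostname: str, zone: str = "brmbl.io") -> bool:
    """Return True if hostname is the zone apex or a name beneath it."""
    # Normalise case and drop any trailing root dot before comparing.
    host = hostname.rstrip(".").lower()
    apex = zone.rstrip(".").lower()
    # Match on a label boundary so "evilbrmbl.io" does not pass.
    return host == apex or host.endswith("." + apex)


# Hypothetical hostnames, for illustration only.
for name in ("app.brmbl.io", "BRMBL.IO.", "evilbrmbl.io", "brmbl.io.example.com"):
    print(name, in_zone(name))
```

Matching on the leading dot is the important detail: a plain suffix comparison would accept look-alike domains that merely end in the same characters.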

Remote Access

Access to production goes through bastion hosts and is granted only to those who need it.

Instructions for configuring access through bastions are found in the bastion runbook.

Monitoring

For more information on how we monitor the health of Brmbl.io, see the monitoring handbook.